Summary and Motivation of the SPP 1500

Motivation

On an average day, each of us comes into implicit contact with a large number of electronic systems that we are often not even aware of. This is almost independent of our job or lifestyle and applies to virtually every individual in a highly developed society. For example, consider someone driving a car to work in the morning. The car is required to be safe, i.e. we expect it to take actions that help avoid an accident or, if an accident occurs, to reduce the negative impact to a minimum. These and many other features are made possible by around 100 electronic systems in a modern car. Another everyday example is healthcare. A patient seeing a medical doctor might receive an X-ray or other scans. A system might then automatically analyze the scans for potential disease patterns, thereby aiding the doctor in the diagnosis. Or one might carry life-sustaining devices such as hearing aids or pacemakers. Another example is the cell phone that we carry with us and probably use multiple times a day to conduct business, to talk to our families, etc. What we typically do not think of when we talk on a cell phone is that, for a single second of talking time, hundreds of millions of computational operations are executed on that cell phone and in the backbone infrastructure, which is among the most complex technical systems in existence. Again, a large number of electronic systems (most of them not visible to us and not even part of the cell phone) make all this possible. But what happens if these systems do not work dependably, if they fail temporarily or completely, or simply do not function as specified? If, for example, the ABS (Anti-lock Braking System) of a car is delayed by a few milliseconds? If a pacemaker paces at the wrong frequency? If a call is dropped when attempting to make an emergency call?

Reliability, Dependability and Aging

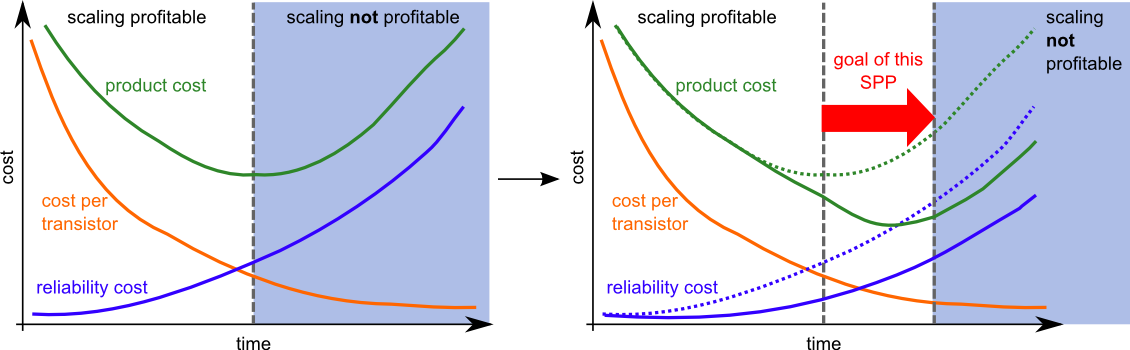

We rely on all this to happen as expected. And in fact, in many cases the trust in the dependability of the systems discussed above is justified. More than 40 years of research and development have led to a silicon process (the technology that makes it possible to integrate many transistors on a single integrated electronic circuit) that is not only dependable but also capable of supporting complex designs, and thus performs all necessary computations in time, at affordable prices, etc. However, there is a major new problem: the electronic systems we talked about are expected to become inherently undependable in the near future when migrating towards new technologies! Simply put, the reason is that the feature sizes of the basic switching devices on integrated circuits are becoming so small (only several tens of nanometers and less) that the fabrication process cannot be entirely controlled, resulting in faulty switching devices. But even after fabrication, the basic switching devices are much more susceptible to the conditions of the surrounding environment, such as heat and exposure to cosmic rays, and they increasingly tend to ‘age’ (i.e. their electrical behavior changes over time).

Challenges

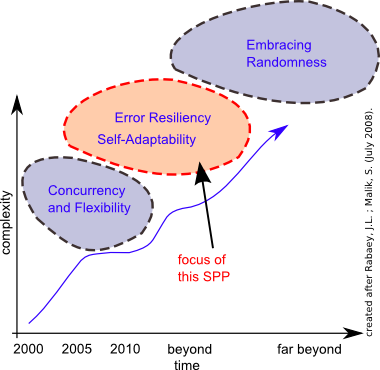

The International Technology Roadmap for Semiconductors (ITRS) states the five challenges 'Design Productivity', 'Power Consumption', 'Reliability', 'Interference' and 'Manufacturability'. This SPP addresses four of these major challenges. There is no technology in sight that might solve the inherent undependability problem at the level of the basic switching devices. This SPP is fully in line with the latest version of the ENIAC Strategic Research Agenda, which states in its edition from November 2007: "Emerging devices are expected to be more defective, less reliable and less controlled in both their position and physical properties. It is therefore important to go beyond simply developing fault-tolerant systems that monitor the device at run-time and react to error detection. It will be necessary to consider error as a specific design constraint and to develop methodologies for error resiliency, accepting that error is inevitable and trading off error rate against performance (e.g. speed, power consumption) in an application-dependent manner." A possible way to approach this major problem is to accept the non-dependability at the level of the basic switching devices while making sure that this non-dependability does not propagate to the user of the respective system. The electronic system in a future ABS of a car might have (temporarily or permanently) faulty basic switching devices (e.g. transistors, carbon nanotubes), but the ABS as a whole should react in time and work in a dependable manner. The crux is a paradigm shift: to build dependable systems from non-dependable basic switching devices.

Outlook

So, what does it take? So far, the implicit assumption has primarily been that these systems and their basic switching devices work in a dependable manner. The circuit design techniques, the computer architectures, the operating systems, the application software etc. are all implicitly based upon this assumption. But again, this is no longer true. Leading researchers around the world are currently drawing attention to this problem. Hence, almost everything from the physics of the circuits up to the application software needs to be rethought from the ground up: computer architectures might need to change, as might application software design, operating systems, and design methodologies. Dependability will become a major design constraint, just as 'Low Power Design' did years ago, which led to new design approaches and architectures.